FutureCon Chicago Cybersecurity Conference 2026

Once again, HALOCK Security Labs and Reasonable Risk will be partnering up at FutureCon to provide helpful industry insights. This year, Chris Cronin will be touching on the subject everybody is talking about in 2026: AI.

Artificial intelligence (AI) can generate cybersecurity risk assessments in seconds—but speed and confidence don’t equal accuracy, accountability, or defensibility. In this session, Chris Cronin exposes why AI alone can’t manage cyber risk, how overreliance can make organizations more vulnerable, and where human judgment remains legally and operationally essential.

DATE: Thursday, January 29, 2026

WHERE: Live In Person | Virtual | Hybrid @ Chicago Marriott Oak Brook

CREDITS: Earn up to 10 CPE Credits

Session Recording

Why AI Can’t Fix Your Cyber Risk (and Might Be Making It Worse)

Speaker: Chris Cronin, ISO 27001 Auditor | Partner, HALOCK and Reasonable Risk | Board Chair, The DoCRA Council


Since the release of ChatGPT (built on GPT-3.5) in 2022, AI (artificial intelligence) has become the default answer to almost every cybersecurity problem, including risk assessments. AI and large language models (LLMs) can generate polished, confident-looking risk analyses in seconds. The problem? Confidence is not competence, and speed is not accountability. When used as a substitute for human judgment, AI-driven risk assessments can obscure real exposure, misrepresent priorities, and create a dangerous illusion of control.

In this session, Chris will demonstrate why AI is fundamentally incapable of managing cybersecurity risk on its own—and how overreliance on AI can actually increase organizational risk. Attendees will see where AI outputs break down, why “AI-generated” does not mean “defensible,” and how regulators, auditors, and courts still expect human decision-making grounded in reasonableness.

Chris Cronin, creator of the Duty of Care Risk Analysis (DoCRA) Standard, has advised governments, courts, Fortune 100 companies, and startups on cybersecurity risk analysis and regulatory compliance. His work centers on helping organizations make risk decisions that can be explained, justified, and defended—not just automated.

Chris will reveal the simple rule Reasonable Risk uses to decide when AI belongs in their SaaS platform—and when it absolutely does not. Attendees will leave with a clear framework for using AI as a supporting tool rather than a decision-maker, and a practical understanding of how DoCRA principles are shaping AI, cybersecurity, and privacy laws around the world.

About Our Speaker

Chris Cronin is a partner at HALOCK Security Labs and at Reasonable Risk. He is also the Chair of the DoCRA Council, a nonprofit that promotes the use of reasonableness in cyber risk analysis and law. He is the principal author of the DoCRA Standard and CIS RAM, the Center for Internet Security’s Risk Assessment Method. Chris works with organizations of all sizes and serves as an expert witness in post-breach cases. Chris’s current focus is on helping organizations use the new demand for governance to their advantage.

Learn how you can efficiently and effectively manage your risk program with Reasonable Risk, the only GRC SaaS tool with a Proven Governance System™.

AI Risk Transcript

Thank you. Hello, everyone. Welcome. I really appreciate you coming out here in single-digit Fahrenheit weather. Well done. Thank you.

So, yeah, I’ve been with HALOCK Security Labs for about sixteen years, and what we’re talking about today is the Reasonable Risk application that we built and how we made decisions about how to put AI into that application, as an example of how to do the risk analysis that you need to do now with AI. So if you’ve ever seen a photograph of a person holding a photograph of the photograph that they’re holding, this is that kind of talk. So, has anyone seen or at least heard of the forty-page warning about AI risk from the CEO of Anthropic?

He presented it at Davos, and he’s been talking about it ever since, but he’s the CEO of Anthropic, and he’s basically said in this forty-page article, oh my god, what are we doing?

And can I please get more investments so we could do more of it? It’s a bizarre letter. But what’s happening is that organizations are realizing that AI is an extraordinarily powerful tool. They know there’s risk, but they don’t know how to manage it.

I really appreciate the talk we just had with Mariana, who was telling us how governance works. This is the kind of talk that we need to hear. We’re gonna start to talk about a specific kind of analysis. So people know me because of my association with Reasonable Risk, HALOCK, or Duty of Care Risk Analysis (DoCRA). I know many of you are using Duty of Care Risk Analysis because it’s just become the law in so many places, most recently in the EU. We’ll talk about that as a standard.

We’re gonna talk today about AI in business, AI in governance, risk, and compliance, and then how Reasonable Risk GRC solved this decision-making dilemma. Again, we had a risk management tool, and we needed to determine how we’re gonna put AI into the system. We’re gonna show you how we did that thinking. We used DoCRA for that. Alright.

Next, the tale of two memes. How many of you have seen this meme? I love this meme.

This is great. Who are we? CEOs. What do we want? AI. To do what? We don’t know.

When do we want it? Right now. Right? Fear of missing out. Right? We know it’s supposed to do something.

For those who are new to Duty of Care Risk Analysis, here’s a meme. We’re breaking it into three slides. Risk analysis is about likelihoods, and it’s about impacts.

It can be quantitative, or risk analysis can be qualitative.

But cyber risk analysis misses the point if you forget the risk you impose on others. That’s the nature of Duty of Care Risk Analysis. When you make a decision, who’s going to potentially be at risk because of the decision you made? When you’re doing Duty of Care Risk Analysis, you’re thinking of a few things. One, how am I sure that the balance is right between the thing that I want to do and the harm that I can impose on others? So when you’re looking at both parties, you have regulators and litigators and executives who say, that makes sense.

If you’ve seen the movie Erin Brockovich, it’s a way to not be PG&E. It’s a way to be Erin Brockovich. And we found that of the hundreds of companies we’ve worked with, it’s one hundred... well, it’s maybe ninety-nine point eight percent universally loved by executives, technicians, and their attorneys because everyone gets what they need in that risk analysis.

So when we talk about AI risk analysis, we wanna talk about what benefit we know AI gives us and some of the risks that we’re concerned about. So you can start by coming up with a list. This is a fairly standard list of things you’ve seen a number of times before. You can increase efficiency.

You can have faster... it’s funny. “Faster intervention” is supposed to say “faster invention,” but intervention works really well, too, when you’re blocking attacks. That’s a human-coded error. That is not an AI error.

Creativity and innovation, rapid problem solving, hyper-personalization, risk reduction, we hear about all these things as really great things we can get from AI. But AI (artificial intelligence) also has risks.

Algorithmic bias and discrimination, IP infringement and data leaks, lack of accountability (having the machine make the decision for us), regulatory noncompliance, job displacement, false information, persuasion with disinformation, rapid development of threats (we’ve seen this), and domino-effect agentic failures, where an agent trusted an agent that trusted an agent that trusted an agent. Cascading effects can be really bad. Generic responses when creativity is needed, we’ve seen that out of AI, plenty. Right?

And environmental costs. These are all things that come up as risks.

So when we hear, what are we going to do about risk management and governance for AI, we think, well, okay, there are two standards out there for risk management of AI. That’s true. If you read the NIST and the ISO guidance or the standards for AI risk management, you see they miss one thing: risk analysis. How do you actually analyze the risk? They tell you how to manage the risk that you’re going to determine, but they don’t tell you how to determine what the risk is. We don’t yet have a standard for AI risk analysis.

So when we talk about DoCRA, if you’re not familiar, DoCRA is do your risk assessment any way you want, the qualitative, the quantitative, any way that we can make that possible. Just make sure you’ve achieved these three principles.

Risk analysis must consider the interests of all parties that the risk might harm. That’s you, it’s your customer, it’s your employees. Anyone who can conceivably be harmed, you’re thinking through that kind of harm in your risk assessment.

Your risks must be reduced to a degree that would not require a remedy to any party. In other words, your goal is to make sure that no one gets hurt, you, your customer, your employee, your investors, the public, etcetera. And safeguards must not be more burdensome than the risks that they protect against. This is the one your bosses love because what you’re able to do is say, you’re not supposed to invest more than this.

They don’t typically hear that from you, do they? All of a sudden, you become very familiar and warm and loving to them because you’ve said your limit for spending is x.

But this is actually now built into laws all across the land and in the EU. I will talk about that in a minute. So DoCRA is a cost-benefit test.
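As a rough illustration of the three principles just described, here is a minimal Python sketch. The 1 to 5 scales, the `ACCEPTABLE` threshold, and the names `Risk`, `is_acceptable`, and `safeguard_is_reasonable` are assumptions made up for this example. They are not taken from the DoCRA Standard, CIS RAM, or the Reasonable Risk application.

```python
from dataclasses import dataclass

# Hypothetical threshold: a score below this is treated as needing no remedy.
ACCEPTABLE = 6


@dataclass
class Risk:
    likelihood: int        # 1 (rare) to 5 (expected), assumed scale
    impact_to_us: int      # 1 (negligible) to 5 (catastrophic), assumed scale
    impact_to_others: int  # same scale: customers, employees, the public

    def score(self) -> int:
        # Principle 1: consider every party the risk might harm,
        # so score against the worst impact, not only our own.
        return self.likelihood * max(self.impact_to_us, self.impact_to_others)


def is_acceptable(risk: Risk) -> bool:
    # Principle 2: reduce risk until no party would require a remedy.
    return risk.score() < ACCEPTABLE


def safeguard_is_reasonable(risk: Risk, safeguard_burden: Risk) -> bool:
    # Principle 3: the safeguard's burden, scored the same way,
    # must not be greater than the risk it protects against.
    return safeguard_burden.score() <= risk.score()


# Illustrative values only.
current = Risk(likelihood=3, impact_to_us=2, impact_to_others=4)
light_safeguard = Risk(likelihood=1, impact_to_us=3, impact_to_others=1)
heavy_safeguard = Risk(likelihood=4, impact_to_us=4, impact_to_others=1)

print(is_acceptable(current))                             # False
print(safeguard_is_reasonable(current, light_safeguard))  # True
print(safeguard_is_reasonable(current, heavy_safeguard))  # False
```

The point of scoring the safeguard on the same scale as the risk is that it gives the "your limit for spending is x" answer directly.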

All US states and DC have used DoCRA in one way or another, or DoCRA’s principles in one way or another, to achieve the balance that you need to get if you’re going to have a reasonable or acceptable risk.

It’s the basis of the new CCPA regs. Has anyone seen the updates to CCPA? You now not only have to do risk assessments and audits, but you also have to report to their regulator, the CPPA, what your risks were. That’s pretty amazing.

But they’re talking about the cost-benefit test that you just saw with those principles we described. And ETSI, which is, in shorthand, the NIST of the EU, explicitly says you’re gonna use DoCRA for your risk assessments for the AI Act, for the CRA, and for other security and privacy rules. So there’s a reason for this principle. We’re gonna get to how you do this risk analysis for AI, but I wanna be sure everyone’s level set on why we’re using Duty of Care Risk Analysis for AI risk analysis. Okay.

So in the United States, the reason why DoCRA was brought in and accepted by regulators so broadly was because it defines this term, reasonability, that’s been in regulations forever.

The HIPAA Security Rule, you name the regulation, reasonability is the goal, and this is a clear way to define what reasonability is. Right? In the EU, what they say is that it solves a problem of balancing innovation with the protection of the public. As they’re saying, you can innovate as a company and protect the public at the same time. This is why we’re accepting Duty of Care Risk Analysis as the standard for risk assessments here.

Now, what we’ve seen and the reason why we invented DoCRA to begin with, this is an invention of HALOCK’s that we give out to the public, so there’d be wide adoption and the adoption’s taken. Our clients were in this situation, where this is an example of an org chart of a hospital, where every department in the hospital was operating in a way that the business understood. Every top-level executive in one department understood what the other departments needed. They understood each other, but cybersecurity was always the orphaned one. They weren’t speaking the language of business.

What they were doing was giving maturity scores. They were saying, my consultants came in, and they gave me a maturity score, and I’m this many, and I’m supposed to be this other many, which is a bigger number, and I need five hundred seventy five thousand dollars to do it.

That’s not talking the language of business. If your consultants are coming to you and saying, let’s do a maturity assessment, you have to realize that when you walk into a board meeting or an executive meeting with a maturity score, if you’re not mature, you’re what? You’re immature. You don’t want to put that name on yourselves.

You want to be able to speak just like all the rest of your peers. What other department goes to the executive board and says, my maturity score is two point seven?

None of them do. So stop doing that, and it’s time to start moving on to risk.

Now, this is a learned process. Risk management and governance, they’ve been talked about in standards and regulations now. You’re supposed to be doing this. You’re supposed to be running cybersecurity the way the rest of the business operates, every other part of the business. But this is a learned process. You have to learn this over time, and we basically chart it out like this.

But if you’re doing this inside this application, Reasonable Risk, what you’re able to do now is start to operate that organization the way every other department does. Every other department has its people, processes, and technologies to communicate where you are and where you have to go. When you get your top-line data, it doesn’t come out of an Excel spreadsheet. It doesn’t come out of a series of Word documents where someone wrote what they thought about risks. It’s coming out of an application. So what the application does is it says, let’s make sure that every level of the organization understands its role in governance, like what Mariana was just talking about. Everyone needs to know what their role is, and this will actually guide you through that process.

It will give you information that goes to executives. Through processes that we’ve developed on the HALOCK side, the consulting side, we know that with just a handful of key risk indicators, we can teach executives how to have conversations about cybersecurity and privacy in a way that helps them make an informed decision, even if they’re not experts.

So these are all embedded into the application.

So why do I bring this up? Well, Reasonable Risk was asking: if we’re trying to make this process efficient for our clients so that they’re learning this new set of skills, this new capability, and we’re helping automate it so that they can do this really well, AI has got to be able to help somehow.

But we can’t be like that crowd at the beginning that said, what do we want? AI. When do we want it? Now. Like, what do we want it to do? We have no idea.

We’re not going to be a FOMO company. We’re going to think about AI in terms of its risk because that’s our business. We’re risk analysts. Right?

So here’s what we were faced with: a new set of features that we thought we could develop through risk analysis, but we had to have a way of determining what we were going to let in and what we were gonna keep out. And we developed this algorithm to help us answer the question because we ask this set of questions every time. You’re gonna see this over and over again during this talk. But this is a systematic way of saying, am I ready for this thing that I’m going to be doing?

Am I creating too great a risk?

What this is basically doing is, in purple, we’re saying, I’m thinking about taking on this kind of AI tool to do this kind of business use.

I’m gonna ask, is this foreseeably going to violate a prohibition? Is it gonna break one of those risks that I listed?

If it can, I have to then ask, is there a safeguard that can reasonably reduce that risk while giving me the benefit?

That’s the second diamond, the DoCRA diamond. This is where the DoCRA risk analysis is done. And depending on your answers to those questions, you’re either not going to do that AI thing, you’re going to do it with the safeguard, or you go ahead and do it because we don’t see any risk here. Right?
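The two-question flow described above can be sketched in a few lines of Python. This is only an illustration of the logic as stated in the talk. The names `Decision` and `decide` are hypothetical, and the judgment about whether a prohibition is foreseeably violated, or whether a safeguard is reasonable, still has to come from people doing the risk analysis.

```python
from enum import Enum
from typing import Optional, Sequence


class Decision(Enum):
    DO_NOT_USE = "do not use AI for this"
    USE_WITH_SAFEGUARD = "use AI with the safeguard"
    USE = "use AI"


def decide(violated_prohibitions: Sequence[str],
           reasonable_safeguard: Optional[str]) -> Decision:
    """Apply the two diamonds in order.

    violated_prohibitions: prohibitions the proposed AI use could
        foreseeably violate (first diamond), as judged by people.
    reasonable_safeguard: a safeguard that reasonably reduces the risk
        while keeping the benefit (second diamond), or None if the
        analysts cannot identify one.
    """
    if not violated_prohibitions:
        return Decision.USE
    if reasonable_safeguard is not None:
        return Decision.USE_WITH_SAFEGUARD
    return Decision.DO_NOT_USE


# Three of the outcomes reported later in this talk, restated as inputs.
print(decide(["lack of accountability", "regulatory compliance",
              "false information"], None))            # Decision.DO_NOT_USE
print(decide([], None))                                # Decision.USE
print(decide(["IP infringement and data leakage"],
             "private DoCRA-based LLM"))               # Decision.USE_WITH_SAFEGUARD
```

Keeping the inputs as human-supplied values is deliberate: the function only records the order of the questions, it does not answer them.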

So, just as a quick reminder, these are the business benefits: increased efficiency, faster intervention or invention, creativity and innovation, rapid problem solving, hyper-personalization, and risk reduction. These are things that we can do.

And these are the prohibitions. These are the lists of risks that we call prohibitions for the test. Algorithmic bias and discrimination, IP infringement or data loss, lack of accountability, regulatory compliance, and job displacement. These are all things that we’ve heard about. Right? This is the list we’re going to go down as our list of prohibitions.

So, on the left of this table, these are the things we had in discussion to say: are we going to use AI to help bolster these capabilities and make them simpler for our users? Again, Reasonable Risk is there to show organizations how to learn this new skill of risk management and to execute with risk management and measurably show risks going down so that executives know the role that they play in this. The simpler that can be, the better off everyone’s gonna be. Right?

So let’s ask, can we automate the definition of risk assessment criteria? Those who know DoCRA know this is the secret sauce. This is what gets the lawyers, the regulators, the executives, and the cybersecurity people to all sing Kumbaya together.

How about if we automate risk likelihood estimations? Have AI help us figure out the probability of problems?

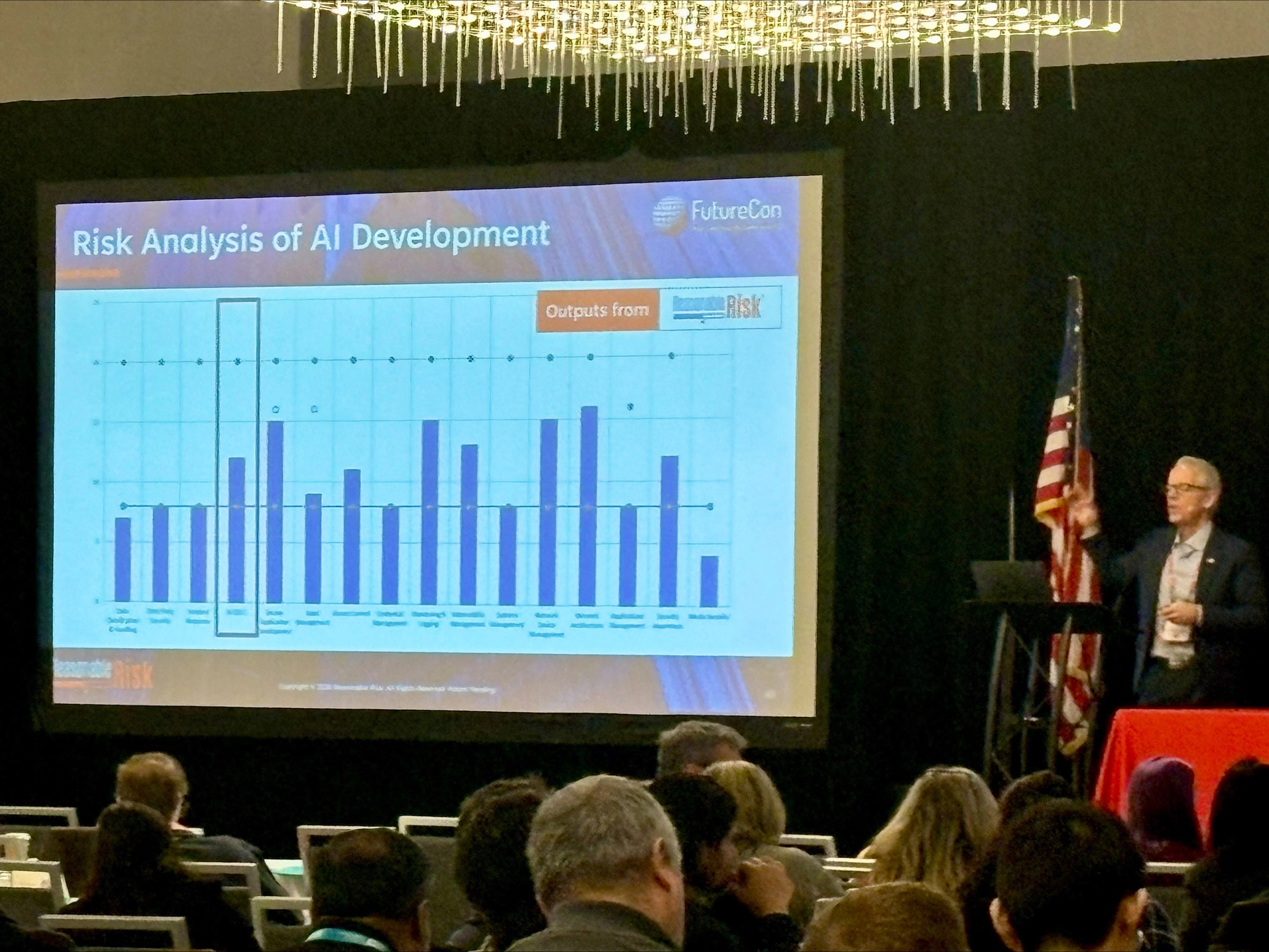

Let’s work that out to see. How about risk analysis of AI development? We’re a risk assessment tool.

What if we actually use this to analyze AI development risk? How about if we use it to talk about AI alignment? Who’s aware of AI alignment? And by the way, if you see things dimming, it’s because, by proportion, I’m getting brighter.

It’s a common effect when I speak.

So AI alignment is this really kind of interesting thing. Remember, I talked about the forty-page complaint from the CEO of Anthropic about the risks of AI, but we’re doing it anyway?

That’s a lack of alignment. They’re doing something against their own ethics, but they can’t stop. That’s a frightening position to be in. We wanna be sure you’re not in that position.

Automating risk management skills development. This is a key one.

If we’re telling the public that you’ve got to now be really good at cybersecurity risk management and AI risk management and privacy risk management, but we haven’t developed a culture for that yet, if we can get AI to help there, holy cow, that would be really great, but we have to see that it’s worth the risk. And then automating executive communication. Wow. If we can figure out a way for AI to communicate with executives, that’s golden. Alright. So we’re gonna be using this risk assessment criteria to figure out whether we can have AI help us with the risk assessment criteria. So the risk assessment criteria is where you say, I’m gonna look at every risk the same way in terms of what matters to the business and what matters in terms of how I can hurt other people, and I’m gonna define acceptability this way.

Right? Can we use AI for that? Well, yes, you can. So I used a few LLMs and a few tools, and found that Gemini made a risk assessment criteria document that looks like this. You’re not expected to read this. I’m just showing you that words popped up in a chart. But I read it, and I said, hey, this isn’t a terrible first attempt.

Right? And I was able to get it done in five minutes. Typically, what we do is we bring someone through a ninety-minute session to help them learn how to do this. We encoded a process into a free document called CIS Risk Assessment Method, or CIS RAM.

You can download that at CIS. There’s an exercise that helps you do this, too. But it’s a way to get executives and technicians and maybe your general counsel to agree on how you’re going to evaluate risk and determine acceptability. That’s what that is.

So here’s the problem.

When we ran AI to do this, it came up with really quick risk assessment criteria. The problem was that if executives aren’t involved in the definition of risk assessment criteria, there’s no accountability.

A machine told us what we should be accepting in terms of risk. Uh-oh. That’s not governance. That’s not accountability. Regulatory compliance. What if we say it’s okay to accept a risk that puts us in violation of a regulation?

Which, by the way, is exactly what your maturity scores are doing. Don’t run governance by maturity scores. Don’t ever say that three point two is okay when governance tells you that you should be testing your controls, which is a four.

False information. Right? You can get false information about what your business does if you rely on AI to define your risk assessment criteria right away. And we couldn’t think of a way to figure out a safeguard for this.

We have a ninety-minute session in person and a free method at CIS. There’s no safeguard that’s gonna make an AI do a really good job with risk assessment criteria in a way that covers your bases. Immediately, don’t do it. So the test failed on the side of risk, and we decided no.

No AI for that issue.

Let’s take a look at automating risk likelihood estimations. That would be great if AI could help you figure out the probability of cybersecurity attacks. I hope everyone in the room sees where I’m going. That’s a little science-fiction-y.

Right? Okay. Glad we’re all there. So the benefit of that is letting AI models figure out the probability of cyber risks.

The risk here is inaccurate estimations.

So if you look at Reasonable Risk and you look in the Center for Internet Security’s Risk Assessment Method, you’re going to see that you have an automatic alignment. In Reasonable Risk, it’s industry based. So you could say, if my controls are this robust and I’m in x industry, my likelihood of this being an issue for me will be x. And that’s automatically determined because we have the industry data about what’s causing incidents, and we have an understanding of how a certain safeguard can reduce the likelihood. So it’s an automatic calculation.
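To make the idea of an automatic, repeatable likelihood calculation concrete, here is a small Python sketch. The formula and the 1 to 5 scales are invented for illustration and are not the CIS RAM table or the calculation inside Reasonable Risk. The only property it is meant to show is that the same industry commonality and control strength always produce the same likelihood.

```python
def likelihood(industry_commonality: int, control_strength: int) -> int:
    """Return a likelihood score from 1 (low) to 5 (high).

    industry_commonality: how common this kind of incident is in the
        organization's industry, 1 (rare) to 5 (very common). Assumed scale.
    control_strength: how robust the relevant safeguard is,
        1 (weak) to 5 (strong). Assumed scale.
    """
    for name, value in (("industry_commonality", industry_commonality),
                        ("control_strength", control_strength)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    # Hypothetical rule: commonality pushes likelihood up, control
    # strength pulls it down, and the result is clamped to the scale.
    return max(1, min(5, industry_commonality - control_strength + 3))


print(likelihood(5, 1))  # 5: common threat, weak control
print(likelihood(3, 3))  # 3: middle of the scale
print(likelihood(1, 5))  # 1: rare threat, strong control
```

Because this is a pure function of two inputs, asking the question twice cannot give two different answers.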

We ran a method through a number of AI models, and we found that the more we asked the same question over and over again, the wider the variety of results that came back. As a scatter plot, this is highly variable. Right? It was doing a terrible job estimating risk and just got worse the more you asked it.

So zero regularity. So the risk of using one of the AI tools that we tried to help you figure out the likelihood of an attack is that you’re just gonna get false information. Is there a safeguard we can think of for that? Well, no.

Just go back to the method that you had before: having a simple slide rule. How commonly is something gonna happen based on your industry, and what is the strength of your controls to prevent it? That should let you know the degree to which you could expect that thing to be a problem for you. Alright.

Now we’ve got another no.
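The repeatability problem described above can be checked with a very simple test: ask the same question many times and measure how much the answers spread. The Python sketch below assumes a hypothetical `estimate` callable that returns one likelihood number per call. The two stand-in estimators are placeholders invented for the example and are not measurements from the models that were tried.

```python
import random
import statistics
from typing import Callable


def spread_of_repeated_estimates(estimate: Callable[[], float],
                                 runs: int = 20) -> float:
    """Ask the same question `runs` times and return the population
    standard deviation of the answers. Zero means perfectly repeatable."""
    samples = [estimate() for _ in range(runs)]
    return statistics.pstdev(samples)


# Stand-in 1: a fixed lookup, which answers the same way every time.
def lookup_estimate() -> float:
    return 3.0


# Stand-in 2: a placeholder for an estimator whose answers vary
# from call to call (seeded so the example is reproducible).
_rng = random.Random(0)


def variable_estimate() -> float:
    return _rng.uniform(1.0, 5.0)


print(spread_of_repeated_estimates(lookup_estimate))        # 0.0
print(spread_of_repeated_estimates(variable_estimate) > 0)  # True
```

A spread that does not shrink, or that grows as more answers are collected, is the "zero regularity" signal that led to the no.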

Alright. So we got that no. Okay. So this next one is, what if we can analyze AI development risk for other people?

This is right in our wheelhouse. So the same way we would analyze risk for software development life cycles, for network configurations, for anything, we could do the same thing for AI development. But what we need is a standard of care to say, what should you be doing?

And in this case, the standard of care comes to us from OWASP. I don’t know if you’ve seen the AI development version of the OWASP Top 10 risks. They do a great job. Right? You would go to OWASP for guidance on any kind of software development risk, and they provide that. Well, we could use this in our likelihood model by saying how capable my SDLC for AI development is against the commonality of the threats that are being created. OWASP gives us a lot of great data for that.

You can even report out.

This is one of the charts in Reasonable Risk. It’s able to very quickly say which of the control areas I am most concerned about, based on how each compares to my acceptable level of risk. The acceptable level is that green line.

But I can now look at AI risk compared to any other risk based on how it measures up to the commonality of attacks versus the standard of care, just like every other control.

We could not think of a single violation of the prohibition here.

We just went down that list and said, I can’t think of breaking anything.

But that’s the risk analysis, and it allowed us to say, just do it. There’s no reason to have a safeguard. Just do it. So we were able to fail on the benefit side with this test. Not fail, but, you know, come down on the side of the benefit.

Now we can add AI SDLC. So what are we doing? We’re starting to say AI is a tool. AI presents risks.

It’s not magic. It’s not intimidating.

It is intimidating the heck out of the CEO of Anthropic. But you just need to pause and say, what am I really trying to achieve here? What is the risk of that going wrong? Who can I hurt? Can I put a safeguard in place to make sure I don’t hurt them? If so, then I’ll try it. It’s pausing.

It’s putting your conscience into what you’re doing. Right? Just systemically, so you always get the right answer.

AI alignment. This is so interesting to us because when you look at AI alignment, this seems a perfect fit for Duty of Care Risk Analysis. So, before we were doing Duty of Care Risk Analysis... well, I’ll say, before I was at HALOCK Security Labs, and we were doing risk analysis anyway, I was an internal auditor. And before being an internal auditor, I was doing operations. I went into internal audit because of Sarbanes-Oxley, and I really loved sort of coaching my team members to say, this is what we’re going for and this is what we’re doing now. How do we find a reasonable way to get to where we need to go? But I didn’t know how to say what reasonable was.

What was interesting about Duty of Care Risk Analysis is that you appeal to people’s better natures, and you say, what can we be? Who do we want to be? Who do we expect the world to need us to be? How do we protect our interests as we are good neighbors?

And once you state things that way in the Duty of Care Risk Analysis and lay it out systematically, you’re gonna find everyone just gets right on board because every time you do a risk analysis, you say, I’m not here just to protect my bottom line. I am protecting that. But I’m also clearly protecting the people who could be hurt if I don’t do things right. Your heart will grow two sizes bigger when you’re in an executive boardroom, and they go, that’s how we want to manage this organization.

And it’ll grow even more when a regulator comes in and says, that’s what we mean by reasonable. And you’ll faint right out when a plaintiff’s attorney says, I’ve got no case against your breach because you clearly have achieved reasonability. That happens.

So now, with AI alignment, the issue here is we don’t have a standard of care. Has anyone read this book, Careless People? It’s about the head of marketing for Facebook, now Meta, and how she saw executives just go for dominance and not care about human beings in the process. There’s a moment in there when they’re deciding whether or not to go into China, and they knew their business would get huge, but China demanded access to the information of people posting on Facebook.

Chinese citizens, even if they live outside of China. And Facebook was about to say yes. They did a risk analysis. And they agreed that they would allow people, dissidents, to be tortured and killed as long as it allowed them to move into China and make money there.

They had other reasons why they couldn’t do it. But when you watch how that risk assessment method went, you’ll see the arbitrariness. You’ll see that they were saying, well, we don’t want to say killed. We’ll just say political disagreement.

Okay. That’s the risk. Political disagreement. Okay. Let’s go to China.

It was the flexibility, the amorality of their risk analysis that allowed them to make what could be a deadly decision for their users.

It’s a really interesting book to read, but that moment really captures it. You cannot have arbitrary risk analysis because it’s gonna get you to go down the wrong road.

So we don’t wanna say, let’s do AI alignment risk analysis if we don’t have some method for doing it.

Because what will happen? You’ll have a regulatory compliance issue. You’ll have false information. You’ll have generic responses when creativity is needed.

These are prohibitions that we’re easily gonna violate.

But we said, what if there’s a safeguard? What if there’s a way for us to make sure that we don’t cause these violations? And we said, let’s apply DoCRA to AI risk analysis, which is what we’re doing now. We’re working with our clients to say, let’s talk about what matters to you, what matters to the people you don’t want to harm. Let’s talk about the innovation, the invention, the benefits that you want to bring, and figure out whether or not that creates an unacceptable impact. And if it does, what safeguard do we put in place that is reasonable? It balances the interests of your needs for innovation with the harm that you could cause others.

Right? So that’s what we’re doing now. We’re at a point where the only way we can do this is to build that method. So if you’re interested in knowing how this happens, because this is all kinda new and we’re working with people to do this, just see me at the Reasonable Risk table and we can show you how. We’re gonna use DoCRA to create a new standard. That’s the way we’re handling this.

We’re gonna go through these last two a little more quickly because this one was really interesting to us. Remember when we said risk management and governance are new skills that take time to learn? You have to. You’re supposed to by law now. So how do you do this? Well, we said, what if there’s an AI way for us to do that, to help people learn quicker?

And we said there are a couple of ways we can do it. We can use public LLMs. We can use a private DoCRA-based LLM.

If we use the public LLM, we have a concern with IP infringement and data leakage because people are putting sensitive information into the queries.

We have a concern about regulatory compliance. People are asking it about governance, and it comes out with this really bad answer that people follow. You can get false information similarly. You can get generic responses where creativity is needed. Why does that matter? Because AI, for the large part, is looking at what already exists, and we’re trying to create something new.

But what if we used a DoCRA-based LLM? That’s interesting. Because if you do that, then you’re able to say, I’m limiting the risk in a way that I can’t think of a way to violate a prohibition. That’s pretty awesome. So we decided the safeguard will be that we’re going to use a DoCRA-based LLM so that when people are using the Reasonable Risk application, they’re using the application, but they can query it to say, how do I determine what a reasonable safeguard might be if I can’t use the one that’s the standard of care? How can I say this to my boss so she’ll understand and get me the budget that I need?

So now that’s a capability of Reasonable Risk. What did we do? We decided, yes, use AI for that because we can’t think of how that would violate a prohibition if we use the safeguard we developed.

Finally, executive communication.

When HALOCK came to market, we were working on this duty of care stuff. We were working with our clients, sometimes implementing ISO 27001, sometimes implementing the NIST Risk Management Framework. And what we were saying is, there’s a process of communication that we had to do, and ultimately, executives have to be involved. Executives have to make informed decisions even if they don’t know the technology. What are the right key risk indicators we can put in front of an executive so they know in truth what’s happening, and so that they understand their role in reducing that risk?

And we found a small set of KRIs that, when you are operating to them in a disciplined way, get you the reactions, the responses, the integration, the governance, the risk management, and the measurable risk reduction that you need.

So we said, okay. If we’ve got that and it’s been proven out, what can we do with AI? Can we use AI tools to help us figure out how to communicate with executives? And we tried.

And what it came up with was something like Will Smith eating pasta with two hands. It’s still at that stage. We’re not there yet. So we were able to say through that test, don’t use AI to try to figure out how to communicate with executives. Rely on what you’re doing now.

At the end of the day, and this is what’s really interesting about all this, we said no to AI for that. But what we do in the right column, when we talk about our services, we do not say this is AI-powered.

We do not say, yes, we use AI. We don’t say it’s Reasonable Risk dot AI. Why?

Well, it seems a little silly to, you know, go to people and say, hey, we’re in the cloud. Did I tell you we’re cloud? Or hey, we’re a dot com for those who have gray hair. Remember how painfully cringey it was to have people say, we’re a dot com.

So we’re not gonna go too far forward and say, we use this tool. We’re just going to tell people the benefit that they get out of Reasonable Risk. We knew the decisions that we had to make based on the risk of harm that we could cause other people and the benefits that we wanted. All the marketplace has to see is the benefit that comes to them when they use that combination of tools and processes.

So, AI risk is necessary to take on, but you can manage it the way you manage any other risk. If you think about it like any other risk and put it through this kind of heuristic that we showed you or the algorithm that we showed you, you’ll get a good sense of how to place it in your organization. And if you wanna figure out how to do it in your organization, just come talk. We are happy to talk about this stuff.

We live it and love it, and we’re happy to pick your brain too. So thank you for attending. Thank you for coming out in the cold, and find me in the halls if you wanna have a chat.

Panel Discussion Recording

Panelist: Terry Kurzynski, CISSP, CISA, PCI QSA, ISO 27001 AUDITOR

More about the Reasonable Risk GRC SaaS Tool