AI Risk & Security Resources

Organizations are deploying AI at scale, often outpacing their risk programs in the process. From productivity software copilots to foundation models embedded directly in customer journeys, AI is quickly moving from research and development projects into production use. But where use cases go, risk follows.

Cybersecurity and compliance programs don’t currently cover emerging risks from AI technology. AI risk includes more than data protection. Its use can impact decision integrity, introduce unintended consequences, and raise new questions about accountability. Models can hallucinate. Outputs can be biased. IP can be exposed. Autonomous or agentic systems can create self-reinforcing errors at scale and machine speed. This risk can go unnoticed until it’s too late, affecting your customers, regulators, and business. Organizations will soon be required to demonstrate that they use AI reasonably, justifiably, and with appropriate governance.

This leads to a fundamental question: How can organizations move fast with AI—without exposing unmanaged, indefensible risk?

Watch our webinar on AI Risk Management.

Speaker: Steve Lawn

AI Cybersecurity Resources

WEBINAR. Approaches to Managing AI in the Enterprise: A Practical Guide to Governing Native AI, Browser-Based AI, and Third-Party AI Tools.

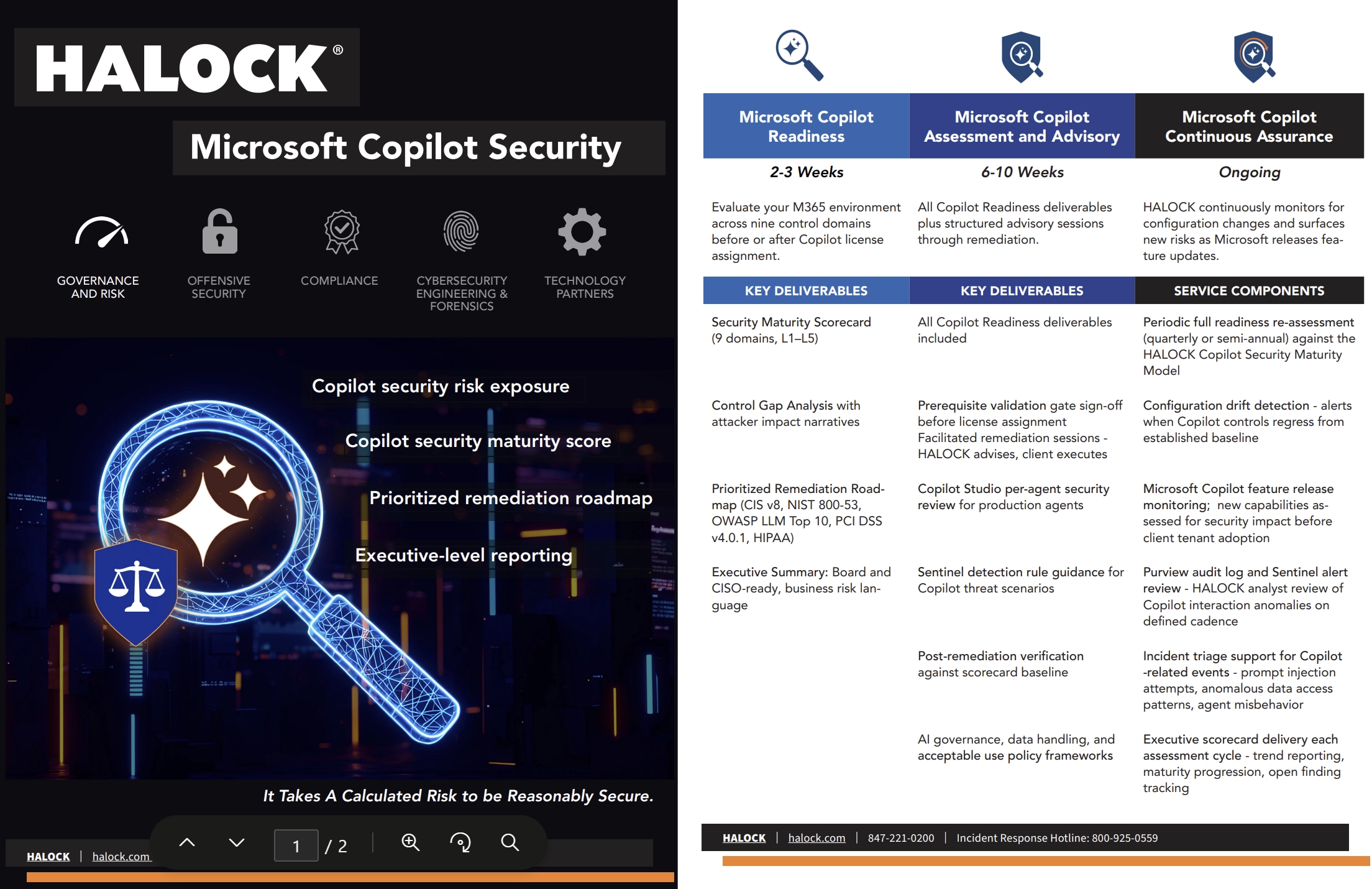

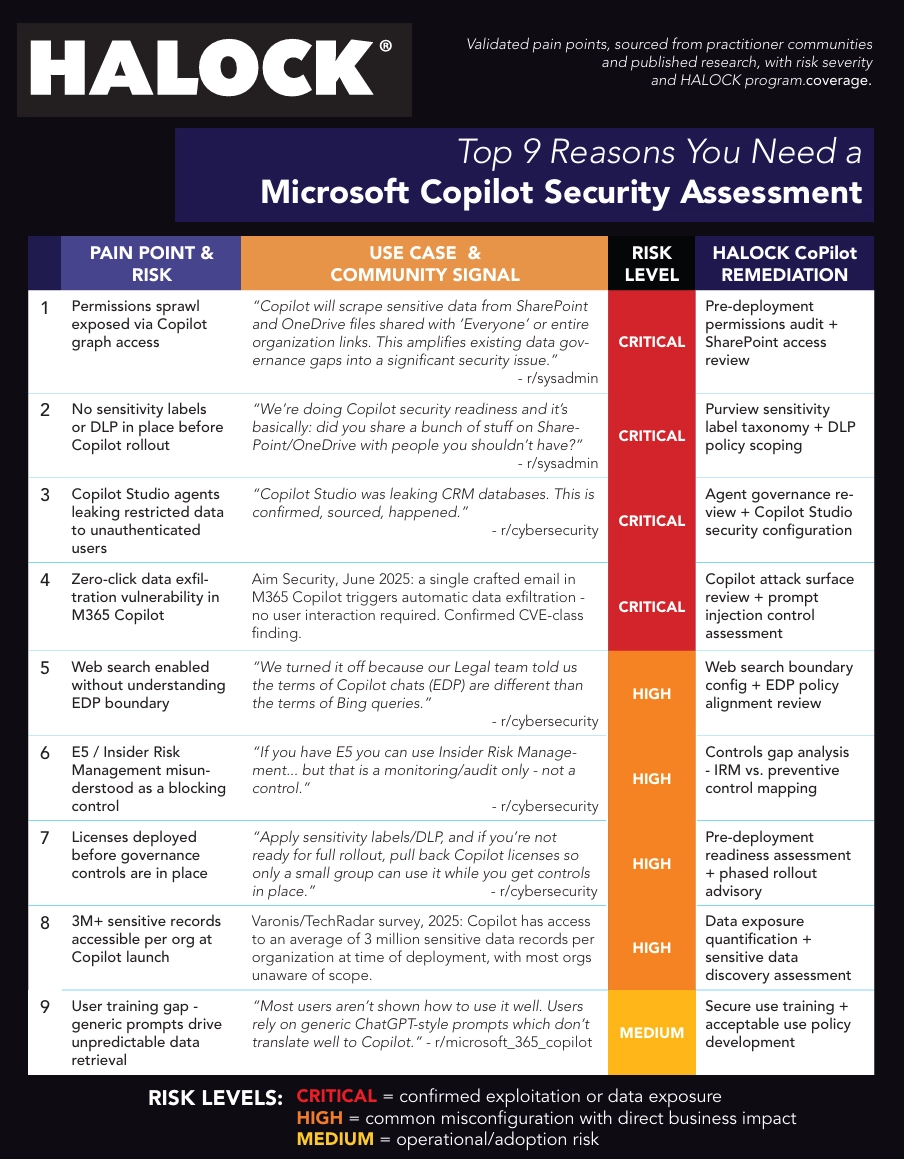

Top 9 Reasons You Need a Microsoft Copilot Security Assessment

How do you address the risk pain points of Copilot?

Review Your AI Security and Risk Posture

Review Your Copilot Security Position

Read more AI (Artificial Intelligence) Risk Insights

TRANSCRIPT

Okay, everybody. Thank you for joining. I really appreciate it.

We’re here to talk about approaches to managing AI in the enterprise.

This is a, you know, like a twenty-five-minute thing, and we’ll go through as much as we can.

I do get kind of verbose, so hopefully, we don’t go over a lot of time or miss anything. So we’ll jump right through here.

Just to start off, HALOCK, who we are, we were founded in 1996. We are a services company, dealing entirely with security. So we do risk, compliance, penetration testing, cloud attack path modeling, and now we’re jumping in, obviously, very full force with the AI world. There is a, you know, obviously, a whirlwind of information coming out from AI and what’s going on and what’s happening. And, you know, we’re just trying to play catch-up here. We also have a strong engineering group and a strong forensics and incident response group, which I am part of.

K.

Speaker, me, that’s me with my baby beard. I’m a solution architect, senior engineer for the CSEF group. That’s cybersecurity and forensics engineering. I’ve been doing this for thirty years, since nineteen ninety-six, honestly. So it’s been a long time. I’ve seen a lot of different situations and fads come through, but AI is definitely not a fad, kinda like the Internet wasn’t a fad. K?

About this webinar, what are we gonna kinda do here, and who is it for, and everything like that?

Right now, this is for pretty much anybody. IT, risk, security, compliance, and governance leaders who want to understand what’s, you know, kinda out there as far as management of AI. The format is purely educational. It’s a twenty-five-minute presentation with a Q&A at the end.

Practical approach. We’re not talking about products. There are products that we bring up in certain situations, but those are native applications. And then the outcome, what we want you to get out of this, is understanding three AI management models.

Right? I mean, there’s crosstalk between some of those things, but for the most part, these are the management models that you want to kinda understand and how they can be utilized, and then, you know, what your choice would be based on that information.

K?

Next one. So I don’t think anybody is surprised by this prompt or this slide. AI adoption is outpacing the ability to manage the, you know, the capabilities of AI or the information of AI. These are six big blocks, major blocks that are happening within the world, and what’s going on.

One is data leakage. Right? I mean, it’s talked about with everybody. Data is going out, and we don’t know where it is.

It’s in, quote unquote, AI. Right? In the LLMs, what’s being taught is what it is. You know, the big thing with ChatGPT, a long time ago with the free version, is it’s training.

It’s learning. It’s doing all that kind of stuff. That’s a big, big issue. And you’ll see this actually, that block, throughout a lot of this.

Shadow AI: what are people using? How do I get visibility into what people are using? Oversharing. Is my environment set up to protect my data? Audit gaps.

Again, shadow AI and audit gaps kinda go in the same thing. Where’s my data? How do I know what people have been using? What are they, you know, what information is being sent out?

What is being used? What is being created within my environment? Scripts and all kinds of fun stuff that’s out there. And then prompt leakage, which is a subset of data leakage.

What information is actually being put into these, you know, one-on-one prompts in the browser? How do I get visibility into that and what it is? And then last but not least is compliance risk. How is that compliance being, you know, documented and tracked?

So what is AI management? This slide, I don’t think anybody’s surprised by this slide. It is very, you know, much the thing we’ve been doing for, you know, really thirty years.

Visibility, governance, control, and monitoring. How do we discover what AI tools are out there? How do we protect and govern this information from policies and risk classifications for AI activity? How do we control it? How do we put these technical safeguards? How do we put it into the box that we need it to be in to make sure that we’re protecting our corporate assets and our corporate information from any kind of AI, you know, leakage, or information being accessed that we don’t want it to be? So we wanna be able to control it.

And then monitoring. We wanna make sure all this stuff is monitored, you know, to the nth degree to make sure that we’re tracking everything, all the interactions, everything that’s going on with that. K?

There are three approaches to AI management.

Three main ways, kinda this is what I was talking about in the beginning, are three different scopes. Number one is native platform controls. These are, you know, Microsoft 365 Copilot. These are Gemini controls within Google, capabilities that are relying on that.

These are all the built-in controls. You know, Copilot has a laundry list of things to set up prior to ever actually deploying Copilot with, you know, security labels and all this stuff. That’s all native applications, all contained into its little ecosystem. Well, not little, but its ecosystem within the environment, to make sure that it’s controlling that information.

But that is for native apps, Copilot, Gemini, things like that, that can really be controlled in that manner. Number two is browser security controls. This is my point, you know, going into this. And then number three is a dedicated AI control platform where I wanna get specific purpose-built AI solutions that protect me as much as I can, as well-rounded as I can, within my environment, within my area.

Now we’re gonna jump into each one of these, to make sure that, you know, kinda go through them and understand them. And I’ll highlight some of the things that are going on there. So the first approach is native platform controls. Again, Microsoft 365 Copilot governance.

We’ve heard of Copilot all over the place. It’s in every little app that we’re using right now with Microsoft and little buttons on the top, and in Excel and in Word and everywhere like that.

How do we perform that governance? Right? And on the left-hand side are the four kinds of things that we wanna, you know, focus on. Conditional access, Microsoft Purview, that’s sensitivity labels, DLP, data classification.

SharePoint Advanced Management, so that actually, you know, restricts Copilot from accessing SharePoint in a holistic way. So it kinda limits, doesn’t kinda, it actually limits the information that it can go in and, you know, basically read and put into its knowledge base, for lack of a better term, knowledge base, back-end information. And then audit logging is all in there with Sentinel and things like that, so that we can track Copilot. We can track DLP events.

We could track everything that goes along with that. On the right-hand side, when this fits best. Right? Your organization standardized on Microsoft 365.

You use it. You live it. You learn it. You love it. That’s the thing that you’re using.

You have existing E3 or E5 licensing.

Identity and governance are in the Microsoft ecosystem. You don’t have any other things that are, you know, running around or, you know, using maybe PIM for, you know, privileged access management and all this kind of stuff instead of outside vendors or outside capability.

Compliance requirements are tied to Microsoft native controls. You’re using Sentinel for your SIEM. You’re using Purview to monitor all of your assets, as far as data and data leakage and everything that’s going out. And then you’ve got an IP, an IT team, excuse me, IT team that is experienced with Microsoft admin tools.

It has, you know, limited coverage of non-Microsoft AI tools. I think we all understand that that information’s there.

Browser security controls. This is, you know, the browser is your way in.

Right? Now it’s kinda like your operating system, almost. Shadow AI detection, copy-and-paste and upload controls, session activity monitoring, role-based access control. Being able to control those things at that point of interaction between our customers, which are our, you know, our people, our employees, being able to kinda, you know, access that information and protect them from themselves or from anybody else being able to do anything to them.

This is for multi-SaaS environments beyond Microsoft, you know, all the kinds of things that are out there.

Shadow AI is the primary concern. I bought ChatGPT. I wanna use ChatGPT. Everything else is getting locked down. That’s where you wanna kinda control that. Cross-platform AI visibility, quickly. So I wanna be able to kinda see everywhere that everybody’s going across all the other AI tools or AI infrastructures.

Limited infrastructure change tolerance. This is a browser plug-in; it goes right in there. And then users access AI through many browser-based tools. So you can access, you know, all that information through the browser.

Focus on the interaction layer, not data at rest, governance, and things like that. So it is a very specific point in time, you know, using that browser to do that.

Dedicated AI controls are AI discovery, AI interaction oversight, policy management, and governance. This is really tightly integrated with your environment, controls a lot of the environment to make sure that you’re not allowing AI to kind of run wild, and you’re protecting your people.

It’s got deep integration into your environment, which can be utilized for governance things. So, AI governance is a strategic priority, you gotta have governance. I’m, you know, I’m kinda HIPAA compliant, or I’ve gotta be some kind of compliant. I need that to make sure I’ve got all that.

Existing tools lack AI-centric controls. So these are, you know, you’ve got a browser. You’ve got other things that are out there, you know, Intune, all that kind of stuff. But this really takes all of that stuff out and says, I’m doing it all with this one tool and I’m able to see it from an AI perspective.

Purpose-built AI controls. This is a newer category. It, you know, requires additional integration effort. So it is a little bit heavier.

You know, you gotta integrate to firewalls or edge, you know, proxies and log systems and SIEM and all that kind of stuff. But it is a very, very good solution as well.

And that’s kind of the three that are there. Now, just to look at the AI management, you know, kinda approach comparison. Right? So, AI visibility, for native.

We’re talking about Copilot here. Microsoft only.

Browser security, you know, we’re looking at, you know, visibility is only the browser, and the dedicated platform is a broad AI discovery. So if you’re kinda worried about visibility, you know, you might wanna go to a dedicated AI platform or just lock down to Microsoft only. So you gotta kinda slice and figure out where you wanna be. Data movement control, obviously, with Microsoft and security labels and auto-labeling and all that kind of stuff, you can document where data is.

Dedicated AI platform, AI interaction layer, so you only know about what that AI is doing. So you’re not really super familiar with what’s going on in the endpoint, but you built rules and DLP structure that says, I don’t want this type of data going out. You can also use, you know, masking and or labeling and stuff like that from native tools like Microsoft Purview. Third-party AI coverage, Microsoft, and then purpose-built for others.

Shadow AI detection, deployment complexity, Microsoft dependencies, and then the best-fit use case. You could see that these are at the bottom. So if you’re going Microsoft standard, you’re going Copilot, you’re in. Right?

It’s, you know, a great solution. It’s got, you know, back end or all that kind of stuff, and being able to protect is really good. Browser security is, you know, I wanna give my employees the tools to go out and do their work, quickly, efficiently, and still protect them. And I wanna lock them down to the browser.

And then a dedicated AI platform. You’re gonna use AI. I know you’re gonna use AI. Let’s lock it down to what we can control using this dedicated platform so that our people can get their work done.

K?
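As an illustration of the data movement control row in that comparison, here is a minimal Python sketch of a DLP-style rule that masks a prompt before it leaves the environment. The pattern names, the two regexes, and the `mask_prompt` helper are assumptions made up for this example; they are not taken from Purview or from any product mentioned in the webinar, and a real policy would rely on vetted classifiers or sensitivity labels.

```python
import re

# Hypothetical patterns for illustration only; not a complete or vetted DLP rule set.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def mask_prompt(prompt: str) -> tuple[str, list[str]]:
    """Return the prompt with matches masked, plus the names of the rules that fired."""
    fired = []
    masked = prompt
    for name, pattern in PATTERNS.items():
        # subn returns the rewritten text and the number of replacements made.
        masked, count = pattern.subn(f"[{name.upper()} REDACTED]", masked)
        if count:
            fired.append(name)
    return masked, fired


if __name__ == "__main__":
    text, rules = mask_prompt("Summarize the file for 123-45-6789, owner bob@example.com")
    print(text)
    print(rules)
```

The list of fired rules is returned alongside the masked text so the same check can feed an audit log, which is the monitoring side of the comparison.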

Let’s see here.

What AI management is, and what it is not. AI management is not only about blocking tools. Right? It’s not at the edge, block, you know, Claude, block ChatGPT. It’s not about that.

It’s not solved by a policy document alone. Right? You can’t just say, “Hey, don’t use x”.

We know that doesn’t work. It’s not fixed by enabling one security feature. There’s no silver bullet. Right? Even if you look at Copilot, say you wanna implement Copilot, there’s a laundry list of things just to get started prior to even enabling Copilot, just to make sure that you’re secure.

It’s not a one-time configuration exercise. No, you’re doing this constantly. It’s always an evaluation process. You’re always reviewing because AI, I don’t think I’m, you know, making anything up here, but AI is a massive speed boost.

Right? So being able to kinda, you know, build things, get things, script things, everything to where it’s at, is incredible. It’s every day, we’re finding something new to go at and look at. It is not the same as traditional IT security.

It’s just not. We’ve gotta think differently. We’ve gotta think outside the box, really, and be able to kinda see how this is, you know, evolving. I just saw somebody on TV today.

You know, he’s going on about how AI is evolving, and, you know, if we’re gonna be the AI pets in the future. I mean, it’s like, it’s crazy how this has taken over the world and taken over people’s minds, as far as thought process and everything. It’s really, really, really a pretty substantial tool. AI management is a repeatable program, not a product.

Right? You gotta have a holistic view. How do I manage AI? I mean, we got an assessment for AI now, all that kind of processing.

Aligned to business risk and use cases.

I want my people to use AI. I want them to be able to get, but I gotta keep it in the realm of how we’re gonna work and do our business. So we can’t just kinda go wild, wild west like everybody else, and deploy OpenClaw to everybody’s desktop.

Calibrated to your ecosystem and tools. Right? I have tools that I use. I have the environment that I use, and it does our business.

Now, how do I either wrap it around or intertwine AI so that it’s secure? It’s, you know, it’s monitored. I get the visibility, everything that goes along with that. Again, ongoing evolution with AI adoption.

Just since, you know, November, December even of last year, nobody knew, you know, Claude Code. Right? Now, if you don’t know what Claude Code is, you’re kinda, you know, behind the eight ball already. It’s just crazy. It’s moving so fast. And with the Claude Code leak, it’s pretty incredible what’s coming out.

It’s a shared responsibility. Right? Everybody’s gotta kinda pull their, you know, pull their little tab on the environment so that we’re all making sure that we’re going in the right direction. K?

Implementation considerations. Where to begin? Right? Build your AI management program. Discover AI usage. Classify use cases.

Assess exposure. What’s all my data? Where is it going? Who’s using it? Who’s touching it?

Are there any scripts? All that kind of stuff. Choose your control model. How am I going to control this massive, you know, undertaking?

Select native, browser, or dedicated AI controls. Or, I mean, that “or” is pretty substantial, or a combination of them all. There is, you know, a definite thought process for having native controls and browser controls in the same company, or native controls and dedicated AI controls in the same company. There’s, you know, no reason for that not to happen. Pilot first.

Obviously, deploy in monitor mode to baseline usage before applying blocking policies. I think, you know, we’ve had that for centuries, it feels. And then monitor and refine. Right?

So you’re always refining. You’re always looking at stuff. I mean, we have a tool, I won’t mention it, but that goes out and categorizes MCP servers, goes out and looks at all the AI LLMs that are out there, and any kind of scripting stuff that’s out there. And, you know, it’s just unbelievable what’s out there so fast.

So being able to monitor stuff that’s happening and then refine and refine and refine so that we’re always moving, always, always making sure that we’re better at things, as things go.
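The pilot-first step, deploying in monitor mode before applying blocking policies, can be sketched roughly like this in Python. The `SANCTIONED_TOOLS` set, the mode names, and the `evaluate` function are hypothetical and only illustrate the idea; no specific product works exactly this way as far as this transcript states.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    allowed: bool
    logged_reason: str


# Hypothetical allow list; tool names here are illustrative only.
SANCTIONED_TOOLS = {"copilot"}


def evaluate(tool: str, mode: str) -> Decision:
    """Evaluate one AI tool request.

    mode is 'monitor' (log only, never block) or 'enforce' (log and block).
    """
    if mode not in ("monitor", "enforce"):
        raise ValueError(f"unknown mode: {mode}")
    if tool.lower() in SANCTIONED_TOOLS:
        return Decision(True, "sanctioned")
    if mode == "monitor":
        # Baseline phase: record what would have been blocked, but let it through.
        return Decision(True, "unsanctioned: would block")
    return Decision(False, "unsanctioned: blocked")


if __name__ == "__main__":
    for mode in ("monitor", "enforce"):
        print(mode, evaluate("ChatGPT", mode))
```

Running the same rules in both modes means the "would block" log entries from the pilot show what enforcement would affect before anyone is actually blocked.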

K? And then organization roles and collaboration, IT, privacy and compliance, business owners, security, legal, governance, all play a role in making sure that AI, you know, AI or AL, some of the people are calling it.

AI is actually governed properly. It’s intertwined into the applications if need be. It’s not put in some place just to say we’re using AI. So being able to, you know, kinda put all that information in there.

All these teams have to work together. Privacy, compliance, legal, business owners, and governance, making sure we’re all doing the same thing, IT and security. Right? We can’t be, you know, from the security side, we can’t be the group of no.

We gotta be the group of, hey. Let’s figure out how to best do this so we can get that done.

Oh, there’s a poll. Okay. So I went through that way quickly. So there is a poll at the end here for people to kinda take and look at.

And the poll is kinda, you know, where in the AI management spectrum do you guys fall? Right? So, you know, where are you at? Are you just beginning?

Are you discovering AI use across your organization?

Are you defining governance?

See if I can minimize that. Sorry.

Are you managing data leakage and privacy risk, and are you monitoring third-party and shadow AI tools? We’re just curious to see where you’re at, just in kind of a general, you know, sense. All of these are, you know, our customers, our people that we’re working with, people we’re talking to, are all in various stages of AI in some aspects. Some are just kinda getting started, some are a little bit farther along, just trying to figure out what, what’s going on. And making sure that we understand, you know, how to really move things forward and make sure that there is information that’s there for you.

How do we get to see the ah, there it is.

Defining governance and acceptable use policy. Alright. Seven out of fifteen. That’s, you know, about fifty percent of you guys, which is really, really great. And again, that’s where everything’s gotta start, defining governance.

You know, obviously, if you know HALOCK, if you know who we are, governance is one of our, you know, tent poles. Right? So being able to figure out what’s going on there is very, very, very important from the governance perspective because you need to be able to have that foundation. How are we gonna use this?

How are we gonna have that? And that is the piece of AI management like I had before. The governance piece of that is all through that. So you guys are in a good spot there.

I think there’s one sorry.

There was one more.

Thought there’s one more. Okay. And the conclusion and what’s next. So, in conclusion, you know, native controls secure AI within their own platforms. You know, dedicated AI control is purpose-built oversight, and then the browser controls are, you know, built for that point in time.

Just understanding what those three levels mean or three kinda deployments are, and then making sure you are kind of, you know, either geared towards those or use those or move to that information so that you’re, you know, building on a good platform and making sure that you’re going through the best process for your environment.

So what’s next kinda for you guys? You definitely wanna, you know, evaluate AI usage across the organization. Doing a discovery of AI. We’re doing these here at HALOCK all the time.

Putting in either purpose-built stuff or using logs and things like that to kinda figure out what people are doing. And it’s surprising. Right?
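A log-based discovery pass like the one described here could look something like the following Python sketch. The log format (one `user host` pair per line), the short `AI_DOMAINS` map, and the `discover_ai_usage` helper are assumptions for illustration; real proxy or DNS logs have more fields, and a real domain inventory would be far longer and actively maintained.

```python
from collections import Counter

# Hypothetical, deliberately short domain-to-tool map for illustration.
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def discover_ai_usage(log_lines):
    """Count (user, tool) pairs from lines shaped like 'user host'."""
    usage = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip lines that do not match the assumed format
        user, host = parts
        tool = AI_DOMAINS.get(host.lower())
        if tool:
            usage[(user, tool)] += 1
    return usage


if __name__ == "__main__":
    sample = ["alice chatgpt.com", "alice chatgpt.com", "bob claude.ai", "bob example.com"]
    for (user, tool), hits in discover_ai_usage(sample).most_common():
        print(user, tool, hits)
```

Counting per user and per tool gives the baseline that the later governance and control decisions build on.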

Figuring out or finding, excuse me, discovering what your folks are really doing is incredible because some of these folks are really, really engaged, and they’re really on the cutting edge, and they’re really trying to, you know, need stuff. So, figuring out how to kinda make sure that you control that. Define AI governance and acceptable use policy aligned to business needs. You know, you wanna make sure that you’ve got that identified.

And, you know, it’s fifty percent of you said, yep. We’re doing that. So we wanna make sure that you’re on that thing. Determine whether native, browser, or dedicated AI controls fit your environment.

Again, so once you’ve done that evaluation and discovery, you’ve kinda defined your governance. Now you wanna determine how I wanna protect this environment, and moving forward, what’s the best way? And then last but not least, consider an AI risk assessment and AI management road map as your next concrete step. Right?

So like I said, kind of intertwined here, HALOCK does offer a, you know, a specific AI risk assessment, aside from our normal risk, you know, assessment kind of process. And we also help you from there in the CSEF group with, kinda, you know, an AI management road map. Where do you wanna go? How do you wanna do that?

You know, asking you the questions and helping guide you along the process of which one of those three pillars you should be using, and the capability that’s there.

Again, not sure where to start? Contact us. We’d be happy to help you. Discuss any AI governance assessment or AI management road map.

We have a ton of stuff up on our website for information, as far as you know, things for Copilot. We’ve launched some Copilot stuff and everything like that. Just please go ahead and take a look, and let us know. Be happy to talk about this and everything.

Obviously, this is a very superficial, not super, but high-level webinar. We’d love to get into the weeds, talk about specific things, ChatGPT, you know, and Anthropic, you know, capabilities and what’s there, and Copilot and how that interacts with your environment. We’d love to talk about all that stuff. K?

Last five minutes. So, by my thing, I did it in twenty-four minutes and thirty-eight seconds, so that wasn’t too bad. Are there any questions or anything anybody has? I hope that I can hear them. I’m not a hundred percent sure.

K.

No. I don’t hear anything.

No questions or anything that goes along with that?

Steve, I think there was one more poll at the end.

Oh, was there?

I think so. Yeah.

Sorry about that.

Ah, okay. My bad. Attendee second poll here.

So, k. So it’s the attendee poll. What topics should we discuss next? This is a really good one. Sensitive data governance approaches because it builds on everything that goes on there.

Compare and contrast cloud assessments versus penetration testing, which are really two, obviously, two separate things. How to do an AI risk assessment, a Microsoft Copilot deep dive, which I would love to talk about, and then, if there’s anything else that you guys wanna discuss.

And, Steve, we did have one question come in. So the question is, what are some examples of how not watching your AI can hurt a company?

Well, data leakage. Right? Anything that you have that might go out.

If you don’t understand what your people are doing or what information is there, you can leak out all of your information very, very quickly. People can, you know, kinda, if somebody’s just messing around with AI, let’s just say, and they’ve gone to Claude Code, they type in Claude Code and say, give me a script that helps me, you know, pull down information. They can inadvertently create a script that also interrogates their machine because they didn’t give enough criteria, and it says, “Okay. Well, I’ll just,” and this has happened.

I’ll just find out what’s in this directory, build a, you know, a prompt based on that, and then kinda scan for that. So now the AI has scanned your local machine and then gone out and tried to find that information. So that would be information that would go out into the prompt, and then that AI would then have that information as far as what’s local to that machine or that directory that the person’s trying to run out of. So there are a lot of cases like that.

I mean, OpenClaw, all those things that are out there.

You know, Claude’s just launched Cowork as far as, you know, being able to kinda do your stuff and schedule your stuff and be able to control that.

So there are those that would be, you know, big kinda data leakage points that are going on there.

Yeah. I have a follow-up for you.

Sure.

With the three different approaches, how granular what kinda information can you get about the AI usage?

Like, how granular can you get to the prompt level?

With the native AI stuff, with the browser, and with the complete AI integration of option three, you can go down to the prompt level. So I can figure out, I can find and track everything that’s being typed into the prompt. So, give me all the information about all my lead HR people or all my executives and their, you know, salaries.

All that information can be tracked within that granular infrastructure so that I can see everything that the prompt is going through. I can see where they’re going, you know, what AI they’re using, what the prompt is, what information they’re going into, what locally they’re trying to kinda access. So information like that, especially with the native stuff in Copilot, I’ll find out exactly what, you know, what Copilot’s kinda trying to look at.

Alright. Thanks. There are no more questions.

Okay. And, again, if anybody wants to talk about, you know, Copilot, ChatGPT, or, you know, OpenAI or Anthropic or anything like that, I’d love to have that conversation. It’s incredible. I love talking about this and trying to, you know, kinda pass information back and forth. You know? There’s a lot of, you know, good information. There’s a lot of, you know, really, really, really fast, stuff that’s happening that is unbelievable.

So just trying to keep up with all this is incredible. There are a lot of resources out there. There’s a lot of people out there that are putting out demos or videos and stuff like that, and we’ll have some ourselves that you can kinda tap into, and blog posts and everything like that. We’ll keep on, you know, deploying information and kinda try to get you to, you know, think about, you know, what it is with AI and security. You know, our people are definitely, you know, using it.

And when I say our people, I mean employees and everything like that. I mean, you know, my eighty-three, eighty-four-year-old mother knows about, you know, AI and is using it. So, I mean, it’s everywhere, and it’s not going anywhere. So please reach out.

We’d be happy to have a conversation with all of you.

K. I think that’s I think that’s all I have. Yeah. It’s all the time I have.

Well, thank you so much, everyone, for joining. I really, really appreciate it. Thanks for spending your, some of your lunchtime with me. And then if, again, if there’s anything else you need, please don’t hesitate to reach out to me, Erik Leach, or anybody at the HALOCK team.

We’d be happy to discuss.

K.

Thank you.