By Cindy Kaplan

Companies are integrating artificial intelligence (AI) into processes, operations, workflows, and decision-making faster than ever before. But here’s the dirty secret: the rush into AI is outpacing the management of its risk.

Organizations are adopting AI much faster than they are securing it.

The result is not just risk. It is an unknown risk. That is a much harder problem to manage and an even harder one to defend.

The Market Problem: AI Adoption Without Due Care

AI has taken on a role that looks a lot like shadow IT. Employees are using publicly available tools to analyze data, write code, summarize contracts, and draft emails. In many cases, this is happening without formal approval or oversight.

It feels efficient. It saves time. It also creates blind spots.

If an organization does not know what tools are being used or what data is being shared, it cannot protect them. Many leaders assume sensitive data is not being entered into these systems, but that assumption rarely holds up under scrutiny. “Shadow AI” creates a fundamental issue: organizations cannot protect what they cannot see.

Real-World Incidents: When AI Risk Becomes Reality

One of the clearest examples came from Samsung in 2023. Engineers entered confidential source code and internal meeting notes into ChatGPT while trying to troubleshoot issues. That information left their controlled environment and could not be retrieved.

Why this should matter to you: This was not a cyberattack. It was a normal action taken by employees trying to work more efficiently. The consequence was permanent exposure of proprietary data.

Relevant Regulations:

- FTC Act (failure to safeguard consumer/business data)

- CCPA/CPRA (data protection obligations)

Another example shows AI being used for social engineering. In 2024, a business was tricked out of $25 million when an employee joined a video call with people they believed were the company’s chief financial officer and other executives. Everyone else on the call was a deepfake created with AI.

Why this should matter to you: This is phishing, evolved, and AI removes the traditional red flags. The usual warning signs were not there: the voices matched, the visuals matched, and the context felt real.

Relevant Regulations:

- SEC Cybersecurity Disclosure Rules

- GLBA Safeguards Rule

- NYDFS Cybersecurity Regulation

AI has also made phishing more convincing and easier to run at scale. These tools can now generate emails that mirror tone, writing style, and even internal communication patterns. Messages can feel familiar enough that employees do not question them.

Why this should matter to you: Traditional awareness training alone is no longer enough when the messages themselves are this convincing.

Relevant Regulations:

- HIPAA Security Rule

- FTC Safeguards Rule

- State breach notification laws

The Core Risk: Unknown Exposure of Sensitive Data

A common statement from organizations is that they are not putting sensitive data into AI tools. Without monitoring or controls, that statement is difficult to prove.

AI systems introduce new challenges. Data can be unstructured. Processing often involves third parties. Outputs are not always predictable. Logging and audit trails may be limited depending on the tool. Taken together, these create one big problem: organizations often do not know what data was exposed or where that information went. From a regulatory standpoint, that lack of awareness is itself a problem.

Most U.S. data protection regulations require reasonable safeguards. We see that expectation in FTC enforcement actions, state privacy laws like CCPA and CPRA, and sector-specific laws like HIPAA and GLBA. If you can’t show that you understood the risks you faced and made a good-faith effort to manage them, it’s difficult to prove your safeguards were reasonable or appropriate.

The Methodology Behind HALOCK’s Risk Solutions

Perfect security does not exist.

Security is about knowing your risk and making smart, reasonable decisions. HALOCK Security Labs bases all of its risk solutions on a methodology called Duty of Care Risk Analysis (DoCRA). DoCRA helps you reduce risk to the point where it won’t cause harm to your organization, while still allowing the business to run.

By approaching risk with DoCRA, you can map your security decisions back to legal standards, assess the severity of potential harm, and document why you implemented the controls you chose. That documentation becomes critical if an incident occurs. DoCRA has been used in litigation support, expert testimony, and breach analysis. Documenting your decisions can be just as important as the controls themselves.

End User Risk with AI: Practical Scenarios

The riskiest activities don’t necessarily come from attackers. Here are some common internal AI use cases that could put the security of your data at risk.

AI USE CASE 1: EMPLOYEE

EMPLOYEE: Improper Handling of Confidential Data

RISK: Copying and pasting client or financial data into a public AI service to get answers more quickly. A client asks a basic question, such as the status of their project. To reply quickly, the employee pastes the client name, project list, pricing, schedule, and goals into a public AI tool and asks for a clean summary of the engagement. That private data has now left the organization’s control, and depending on the provider’s terms, it may be stored, logged, or reused by the tool. Although the exposure is unintentional, the employee risks losing their job, damaging the client relationship, and creating legal issues for the company. The company also no longer has oversight of its data or how it will be used.

POTENTIAL HARM: Data exposure and brand reputation

AI USE CASE 2: DEVELOPER

DEVELOPER: Leaking Source Code

RISK: Pasting code into a public AI tool to help debug a problem. To finish a project faster, a developer shares code with a public AI tool, which processes that valuable information and may retain it outside the organization’s control. The code may reveal system structure, naming conventions, endpoints, or proprietary source code that competitors could replicate or attackers could exploit.

POTENTIAL HARM: Theft of intellectual property (IP) and competitive disadvantage

AI USE CASE 3: LEGAL

LEGAL: Using AI to Review Contracts

RISK: Using an AI tool to summarize contracts without considering data security. An employee uploads a document, such as a contract, NDA, or SLA, and asks AI to summarize it, edit it, and refine the language for a report. AI can help review materials, but that does not mean the review happens in a secure environment. Sensitive details such as pricing, liabilities, or termination clauses can then end up in an email summary for the team. That email is easily forwarded outside the organization, exposing negotiating positions or issues involving named parties. Cleaning this up requires damage control.

POTENTIAL HARM: Breach of confidentiality and potential fines

AI USE CASE 4: CUSTOMER COMMUNICATIONS

COMMUNICATIONS: Using Generative Replies with Customers

RISK: Sharing information about the internal workings of the company through generative replies. A support team employee uses AI to draft a response to a customer’s issue with the company’s software, giving the tool only a general prompt. Prompts should be structured so that only specific, approved information is shared. AI replies based on the patterns it has learned, but it does not necessarily account for your company’s confidentiality policies. It may include details about internal issues or workflows, or even reference other vendors.

POTENTIAL HARM: Unintended sharing of information or exposing company-specific challenges

All of these scenarios can happen without negative intent. They’re realistic. They make sense. They save time. But each introduces a potential exposure. That’s why governance shouldn’t be limited to blocking tools. Governance practices should be guided by policies, training, and, most importantly, an understanding of how employees actually use AI.

Artificial Intelligence Regulations to Watch (US)

While regulations are still emerging, the general direction is already clear.

FEDERAL

At the federal level, the NIST AI Risk Management Framework is the most commonly referenced starting point. The 2023 Executive Order on Safe, Secure, and Trustworthy AI laid out an early roadmap for federal regulation and safety, although it was rescinded in January 2025. The FTC has already brought enforcement actions related to the misuse of data and the deceptive use of AI.

STATE

At the state level, several laws are already in place or set to take effect. The California CPRA includes a provision on automated decision-making. Colorado’s Artificial Intelligence Act will go into effect in 2026. Illinois’s Biometric Information Privacy Act (BIPA) lets residents sue over the collection and use of biometric data, including by facial recognition technology. New York City requires bias audits for certain AI hiring tools.

More proposals are being considered in a number of states. And there will likely be more federal regulations in the future. The message is clear, though. Regulators expect organizations to understand AI risks and manage them appropriately.

April 26th: AI Awareness Day

AI Awareness Day is April 26th. It serves as a great opportunity for companies to pause and evaluate current practices.

Review how AI is being used. Update acceptable use policies. Provide employees with training on potential risks, and center that training on how employees actually use AI rather than on perceived threats. Use a framework such as DoCRA to guide decisions that you can justify to regulators if needed.

Final Thought

AI is changing how organizations operate, but it is also changing how risk shows up. The organizations that handle this well will not simply be the fastest to adopt new tools. They will be the ones who can clearly explain what they did to manage risk and why those decisions made sense at the time.

That’s where HALOCK and DoCRA can make a difference.

Review Your AI Security and Risk Posture

Review Your Copilot Security Position

FAQ: AI Security and Risk

Can I trust ChatGPT with business data?

Using public AI services without clear guidance and controls is risky. Not all tools make clear how your data will be stored or whether it will be reused.

What is Shadow AI?

It refers to employees using AI tools without formal approval or oversight.

What is the biggest AI security risk today?

Lack of visibility into sensitive data exposure.

How can organizations make AI use defensible?

By applying a structured approach such as DoCRA, documenting decisions, and aligning controls with the level of risk and potential harm. Using DoCRA helps establish reasonable security.

Reasonable security and duty of care are established when your security program aligns your decision-making with your organization’s mission, objectives, and obligations.

AI Cybersecurity Resources

1. WEBINAR. Approaches to Managing AI in the Enterprise: A Practical Guide to Governing Native AI, Browser-Based AI, and Third-Party AI Tools.

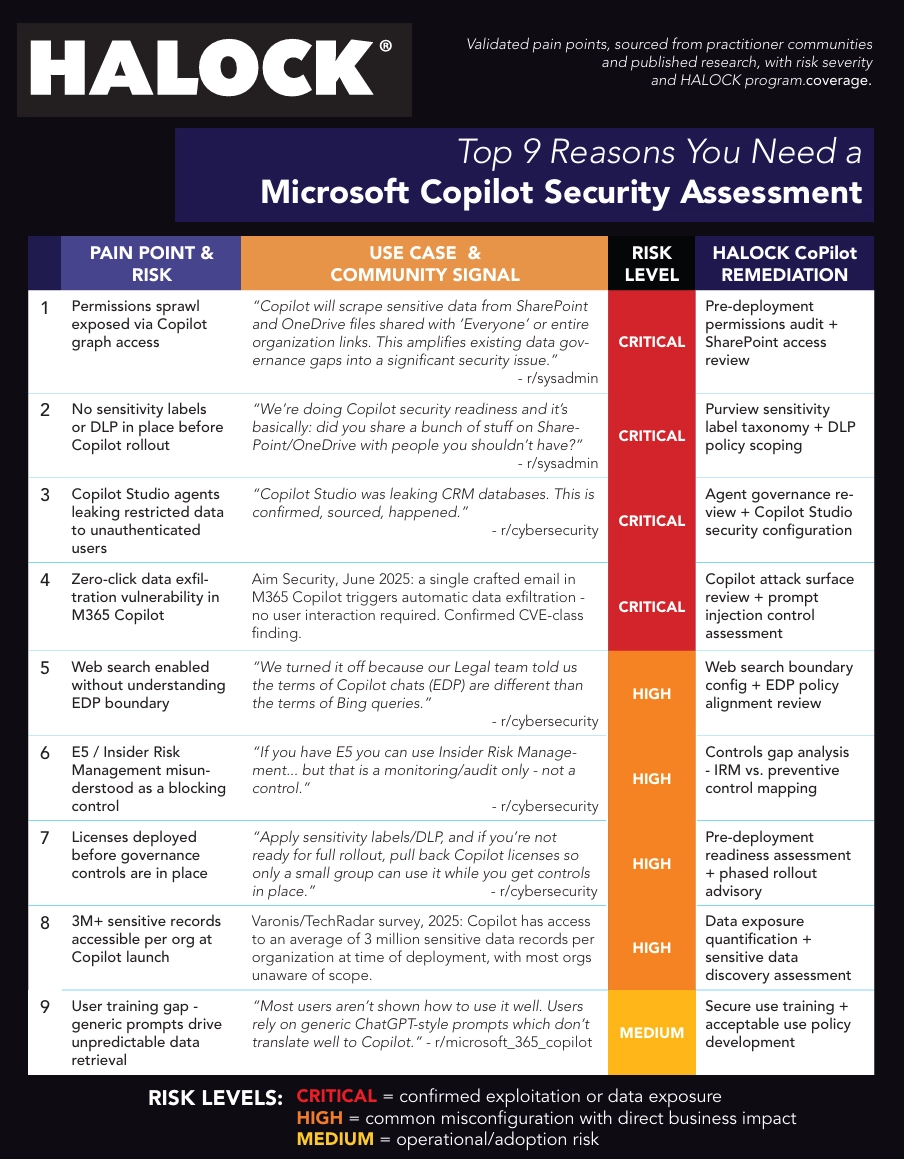

2. RISK CHART. Top 9 Reasons You Need a Microsoft Copilot Security Assessment

Read more AI (Artificial Intelligence) Risk Insights